A workshop involving two performers was carried out in order to re-evaluate the performative notions of participation and navigation (Dixon 2007), described in post 15. Navigation.

Previously a series of auto-ethnographic enactments (documented in posts August-December 2015) provided some initial feedback on participation and navigation with iMorphia . It was interesting to observe the enactments as a witness rather than a participant and to see if the performers might experience similar problems and effects as I had.

Participation

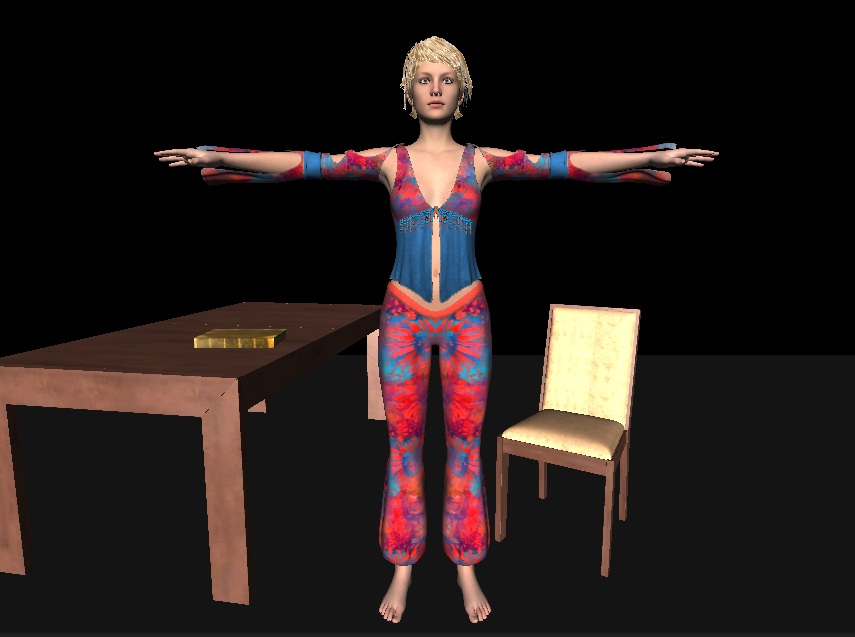

The first study was of participation – with the performer interacting with virtual props. Here the performer was given two tasks, first to try and knock the book off the table, then to knock over the virtual furniture, a table and a chair.

The first task involving the book proved extremely difficult, with both performers confirming the same problem as I had encountered, namely knowing where the virtual characters hand was in relationship to ones own real body. This is a result of a discrepancy in collocation between the real and the virtual body compounded by a lack of three dimensional or tactile feedback. One performer commenting “it makes me realise how much I depend on touch” underlining how important tactile feedback is when we reach for and grasp an object.

The second task of knocking the furniture was accomplished easily by by both performers and prompted gestures and exclamations of satisfaction and great pleasure!

In both cases, due to the lack of mirroring in the visual feedback, initially both performers tended to either reach out with the wrong arm or move in the wrong direction when attempting to move towards or interact with a virtual prop. This left/right confusion has been noted in previous tests as we are so used to seeing ourselves in a mirror that we automatically compensate for the horizontal left right reversal.

An experiment carried out in June 2015 confirmed that a mirror image of the video would produce the familiar inversion we are used to seeing in a mirror and performers did not experience the left/right confusion. It was observed that the mirroring problem appeared to become more acute when given a task to perform involving reaching out or moving towards a virtual object.

Navigation

The second study was of navigation through a large virtual set using voice commands and body orientation. The performer can look around by saying “Look” then using their body orientation to rotate the viewpoint. “Forward” would take the viewpoint forward into the scene whilst “Backward” would make the scene retreat as the character walks out of the scene towards the audience. Control of the characters direction is again through body orientation. “Stop” makes the character stationary.

Two tests were carried out, one with the added animation of the character walking when moving, the other without the additional animation. Both performers remarking how the additional animation made them feel more involved and embodied within the scene.

Embodiment became a topic of conversation with both performers commenting on how landmarks became familiar after a short amount of time and how this memory added to their sense of being there.

The notions of avatar/player relationship, embodiment, interaction, memory and visual appearance are discussed in depth in the literature on game studies and is an area I shall be drawing upon in a deeper written analysis in due course.

Finally we discussed how two people might be embodied and interact with the enactments of participation and navigation. Participation with props was felt to be easier, whilst navigation might prove problematic, as one person has to decide and controls where to go.

A prototype two performer participation scene comprising two large blocks was tested but due to Unity problems and lack of time this was not fully realised. The idea being to enable two performers to work together to lift and place large cubes so as to construct a tower, rather like a children’s toy wood brick set.

Navigation with two performers is more problematic, even if additional performers are embodied as virtual characters , they would have to move collectively with the leader, the one who is controlling the navigation. However this might be extended to allow characters to move around a virtual set once a goal is reached or perhaps navigational control might be handed from one participant to another.

It was also observed that performers tended to lose a sense of which way they were facing during navigation. This is possibly due to two reasons – the focus on steering during navigation such that the body has to rotate more and the lack of clear visual feedback as to which way the characters body is facing, especially during moments of occlusion when the character moves through scenery such as undergrowth.

These issues of real space/virtual space colocation, performer feedback of body location and orientation in real space would need to be addressed if iMorphia were to be used in a live performance.